Building products and services on Google Cloud Platform (GCP) has been a big ingredient in Credit Karma’s hypergrowth. GCP’s PaaS and SaaS offerings let teams and individuals focus on their real goals and deliverables instead of building and maintaining infrastructure components from scratch. Leveraging GCP is very straightforward in pet projects and proofs of concept (POCs), but it can get more complicated with complex deployments and interconnected mechanics.

By the end of this article, you should have a better understanding of how to think about GCP access management for your current and future design and implementations.

Identity and Access Management (IAM)

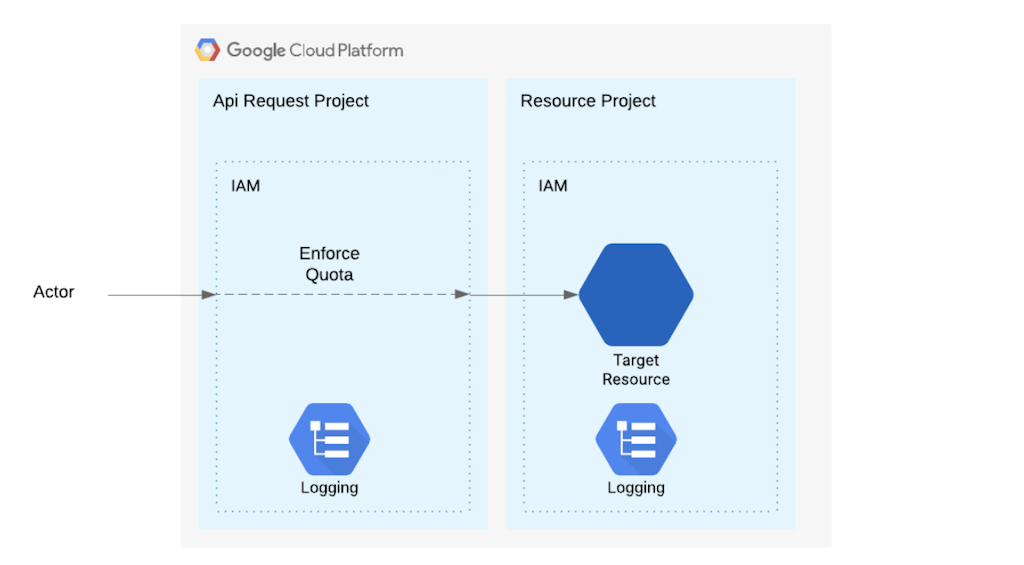

In GCP land, every resource has an IAM policy, either set on the resource itself or inherited from its ancestors (project, folder, organization). The bindings in those policies decide whether a given actor can perform the desired operation on the target resource.
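A minimal sketch of how inheritance plays out, in plain JavaScript. The policy shape (`bindings` made of a `role` and a list of `members`) mirrors the JSON that IAM uses, but the hierarchy, roles and the `findGrant` helper are hypothetical. Real IAM also expands roles into permissions (so a broad role on an ancestor can cover a narrower one), and it evaluates conditions and deny policies; this sketch ignores all of that and matches role names exactly.

```javascript
// Hypothetical hierarchy: each node has its own IAM policy and a parent.
// The policy shape ({bindings: [{role, members}]}) mirrors IAM's JSON format.
const org = {
  name: 'organizations/123',
  parent: null,
  policy: {bindings: [{role: 'roles/viewer', members: ['group:auditors@corp.com']}]},
};
const project = {
  name: 'projects/resource-project',
  parent: org,
  policy: {bindings: [{role: 'roles/storage.admin', members: ['user:alice@corp.com']}]},
};
const bucket = {
  name: 'projects/_/buckets/reports',
  parent: project,
  policy: {
    bindings: [{
      role: 'roles/storage.objectViewer',
      members: ['serviceAccount:service-x@api-project.iam.gserviceaccount.com'],
    }],
  },
};

// Walk from the resource up to the root: a role bound on the resource itself
// or on any ancestor applies. Returns the name of the granting node, or null.
function findGrant(resource, member, role) {
  for (let node = resource; node !== null; node = node.parent) {
    const granted = node.policy.bindings.some(
      (b) => b.role === role && b.members.includes(member),
    );
    if (granted) return node.name;
  }
  return null;
}

console.log(findGrant(bucket, 'user:alice@corp.com', 'roles/storage.admin'));
// projects/resource-project (inherited from the project)
console.log(findGrant(bucket, 'user:bob@corp.com', 'roles/viewer'));
// null
```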

In GCP, the project hosting the target resource may be different from the project receiving the API requests. The project receiving the API requests is responsible for applying some IAM checks and for enforcing resource usage limits (e.g. number of calls per minute), so most of the API request’s logs end up in that API request project. On the other hand, when the target resource lives in a different project (the ResourceProject), permissions on it are evaluated from the ResourceProject’s position in the IAM hierarchy. You can find more details about the GCP resource hierarchy at https://cloud.google.com/resource-manager/docs/cloud-platform-resource-hierarchy

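The following sample shows how to set the project used for API requests:

```javascript
const {Storage} = require('@google-cloud/storage');

// Project used for API requests (the ApiRequestProject). If omitted, the SDK
// falls back to the project detected from the environment or credentials.
const conf = {projectId: 'API_PROJECT_ID'};
const storage = new Storage(conf);

// Placeholders: the bucket can live in a different project (the ResourceProject).
const bucketName = 'BUCKET_NAME';
const filename = 'FILE_NAME';
const stream = storage.bucket(bucketName).file(filename).createReadStream();
```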

Quick Tip: When you hit a quota or look for logs, this simple fact helps you find the right project and resources.

This is a very rough order of execution for an API call:

- Actor makes an API call

- The request is attributed to the ApiRequestProject

- ApiRequestProject checks quota and some IAM permissions

- ResourceProject enforces resource permissions

Note: In simpler deployments, most of the time the ApiRequestProject and ResourceProject are the same project.
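The rough order above can be modeled as a toy two-stage check, which helps when deciding which project to look in after a failure. This is a hypothetical sketch for reasoning only, not how Google implements the checks: the project names, the quota number, the reader list and the `callApi` function are all made up. The reason strings reuse the canonical error codes `RESOURCE_EXHAUSTED` and `PERMISSION_DENIED`.

```javascript
// Toy model only: project names, quota and the reader list are made up.
const projects = {
  'api-project': {quotaPerMinute: 2, used: 0},
  'resource-project': {
    // resource -> members allowed to read it
    readers: {'buckets/reports': ['serviceAccount:service-x@api-project.iam.gserviceaccount.com']},
  },
};

function callApi({actor, apiProject, resourceProject, resource}) {
  // The request is attributed to the ApiRequestProject, which owns the quota
  // (so quota errors and most request logs show up there).
  const api = projects[apiProject];
  if (api.used >= api.quotaPerMinute) {
    return {ok: false, deniedBy: apiProject, reason: 'RESOURCE_EXHAUSTED'};
  }
  api.used += 1;
  // The ResourceProject enforces permissions on the resource itself.
  const readers = projects[resourceProject].readers[resource] || [];
  if (!readers.includes(actor)) {
    return {ok: false, deniedBy: resourceProject, reason: 'PERMISSION_DENIED'};
  }
  return {ok: true};
}

const req = {
  actor: 'serviceAccount:service-x@api-project.iam.gserviceaccount.com',
  apiProject: 'api-project',
  resourceProject: 'resource-project',
  resource: 'buckets/reports',
};
console.log(callApi(req));                                  // allowed
console.log(callApi({...req, actor: 'user:bob@corp.com'})); // denied by resource-project
console.log(callApi(req));                                  // quota exhausted in api-project
```

The point of the model is the `deniedBy` field: a quota failure points at the ApiRequestProject, a permission failure at the ResourceProject.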

Google Identities

Any time a process/actor performs an operation on a GCP resource, the actor’s identity must be included in the request. The available options are:

- User: USERNAME@corp.com (GSuite or Gmail)

- Group: GROUPNAME@corp.com (Google Group)

- ServiceAccount: service-x@PROJECT_ID.iam.gserviceaccount.com

- Domain: corp.com (GSuite domain)

- allAuthenticatedUsers: Special identifier for all Google account holders (high risk)

- allUsers: Special identifier for all users (high risk)

- API Key: Only for special cases, see https://cloud.google.com/docs/authentication/api-keys
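When these identities appear in an IAM binding, each type is written with a prefix (`user:`, `group:`, `serviceAccount:`, `domain:`), while `allUsers` and `allAuthenticatedUsers` are written bare. The sketch below builds those member strings and flags the two public identifiers in a set of bindings; the helper names `toMember` and `publicMembers` are hypothetical. API keys are not IAM members, so they have no entry.

```javascript
// Prefixes used for principals in IAM policy bindings.
const PREFIX = {
  user: 'user:',
  group: 'group:',
  serviceAccount: 'serviceAccount:',
  domain: 'domain:',
};

// The two public (high risk) identifiers take no prefix and no address.
const PUBLIC_MEMBERS = new Set(['allUsers', 'allAuthenticatedUsers']);

// Hypothetical helper: build the member string used in a binding.
function toMember(type, id) {
  if (PUBLIC_MEMBERS.has(type)) return type;
  if (!Object.prototype.hasOwnProperty.call(PREFIX, type)) {
    throw new Error(`Unknown identity type: ${type}`);
  }
  return PREFIX[type] + id;
}

// Hypothetical helper: list the public members found in a set of bindings.
function publicMembers(bindings) {
  return bindings.flatMap((b) => b.members.filter((m) => PUBLIC_MEMBERS.has(m)));
}

const bindings = [
  {
    role: 'roles/storage.objectViewer',
    members: [
      toMember('serviceAccount', 'service-x@PROJECT_ID.iam.gserviceaccount.com'),
      toMember('allUsers'),
    ],
  },
  {role: 'roles/viewer', members: [toMember('domain', 'corp.com')]},
];
console.log(publicMembers(bindings)); // [ 'allUsers' ]
```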

Regardless of where a process runs (e.g. a developer’s machine, GCE, a Kubernetes container, even AWS), it needs to assume an identity. There are two distinct ways for a running process to assume a GCP identity: Application Default Credentials or Service Account Keys (API Keys are out of scope of this article and not recommended).

Application Default Credentials

In this scenario the process uses the identity available in its running environment. Separating the process from identity management lets developers focus on the actual task without worrying about secret management, key rotation and identity changes. It also allows operations and security teams to manage keys and identities independently of the development cycle.

In GCP terminology, Application Default Credentials (ADC) are probably the easiest and safest way to pass an identity to server processes: run the process on a host where a default identity is present, and all Google SDKs will pick it up and use it.

Example:

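```javascript
const {Storage} = require('@google-cloud/storage');

// No credentials are passed: the SDK resolves them through ADC.
const storage = new Storage();

storage.getBuckets().then(([buckets]) => console.log(buckets.map((b) => b.name)));
```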

Developers’ Machines

On a developer’s machine, run the following command once and go through the OAuth flow with a personal account. At the end, the `gcloud` utility writes the OAuth credentials to ~/.config/gcloud/application_default_credentials.json.

```shell
$ gcloud auth application-default login
```

Some IT organizations enforce additional policies that require frequent reauthentication. For example, an organization may decide to issue tokens valid for 4 hours; after that, developers/operators need to rerun the command.

Docker containers tip: When running the application inside a Docker container, you can reuse that identity by mounting the host’s ~/.config/gcloud/ directory at ~/.config/gcloud/ under the container user’s home directory.

Google Managed Computes

When running processes on Google-managed compute, including GCE, GKE, Cloud Functions, Cloud Build and other compute services, Google automatically manages the service account key (usable only by GCP) and exposes a Metadata Server (Ref #6) on those machines that hands out OAuth tokens. The SDK automatically fetches tokens from the Metadata Server to make its calls. This is the recommended way to use Service Accounts in GCP. When employing this method, the process should run in the same project that hosts the service account.
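To show what the SDK does behind the scenes, here is a hedged sketch of the token request against the Metadata Server. The URL and the required `Metadata-Flavor: Google` header follow the metadata server documentation; the helper names `tokenRequest` and `fetchAccessToken` are hypothetical, the fetch only succeeds on a Google-managed compute host, and it assumes Node 18+ for the global `fetch`. In real code, let the SDK do this for you.

```javascript
// Metadata server base URL (reachable only from GCP compute).
const METADATA_BASE = 'http://metadata.google.internal/computeMetadata/v1';

// Build the request for a service account's OAuth token ('default' = the
// service account attached to the host).
function tokenRequest(account = 'default') {
  return {
    url: `${METADATA_BASE}/instance/service-accounts/${account}/token`,
    // The metadata server rejects requests that lack this header.
    headers: {'Metadata-Flavor': 'Google'},
  };
}

// Hypothetical helper: fetch a token (Node 18+ global fetch).
async function fetchAccessToken() {
  const {url, headers} = tokenRequest();
  const res = await fetch(url, {headers});
  if (!res.ok) throw new Error(`Metadata server returned ${res.status}`);
  // Response body: {access_token, expires_in, token_type}
  const {access_token: accessToken, expires_in: expiresIn} = await res.json();
  return {accessToken, expiresIn};
}
```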

Auto Detected Service Account Key

It is possible to use the GOOGLE_APPLICATION_CREDENTIALS environment variable to point to an SA-Key file on the file system. This is the middle ground between implicit and explicit identities. It is extremely useful when the SA’s host project differs from the project or location that uses the service account, and datacenter deployments can leverage it too. The application can easily find and use the given service account, but the SA-Key now has to be present on the machine, handled under highly sensitive data policies, and rotated frequently. Shifting key management from GCP to the operations team(s) may require additional work to follow organizational policies such as secret management, auditing and key maintenance procedures.
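Taken together, the credential sources described so far are where ADC looks, in this documented order: the GOOGLE_APPLICATION_CREDENTIALS variable, then the file written by `gcloud auth application-default login`, then the service account attached to the host via the Metadata Server. The function below is a simplified, hypothetical model of that order (not the client libraries’ actual code, which handles more cases and errors); file checks and the “am I on GCP” test are injected so the logic can be read and tested offline.

```javascript
const path = require('node:path');

// Simplified model of the ADC lookup order. `fileExists` and
// `onGoogleCompute` are injected so this runs without touching GCP.
function resolveAdcSource({env, homeDir, platform, fileExists, onGoogleCompute}) {
  // 1. An explicit key file named by the environment variable wins.
  if (env.GOOGLE_APPLICATION_CREDENTIALS) {
    return {source: 'env', file: env.GOOGLE_APPLICATION_CREDENTIALS};
  }
  // 2. The well-known file written by `gcloud auth application-default login`.
  const wellKnown = platform === 'win32'
    ? path.win32.join(env.APPDATA || '', 'gcloud', 'application_default_credentials.json')
    : path.posix.join(homeDir, '.config', 'gcloud', 'application_default_credentials.json');
  if (fileExists(wellKnown)) return {source: 'gcloud', file: wellKnown};
  // 3. The service account attached to the host, via the Metadata Server.
  if (onGoogleCompute) return {source: 'metadata-server'};
  return {source: 'none'};
}

// A developer laptop after running the gcloud login command:
const laptop = resolveAdcSource({
  env: {},
  homeDir: '/home/dev',
  platform: 'linux',
  fileExists: (p) => p === '/home/dev/.config/gcloud/application_default_credentials.json',
  onGoogleCompute: false,
});
console.log(laptop.source); // gcloud
```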

Kubernetes Engine Workload Identity

Workload Identity is the recommended way to access Google Cloud services from within GKE due to its improved security properties and manageability. With Workload Identity, you configure a Kubernetes service account (KSA) to act as a Google service account (GSA). Any workload running as the KSA automatically authenticates as the GSA when accessing Google Cloud APIs. Please refer to GCP documentation for more details: https://cloud.google.com/kubernetes-engine/docs/how-to/workload-identity.
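Linking a KSA to a GSA comes down to two pieces of configuration: an IAM binding on the GSA that grants `roles/iam.workloadIdentityUser` to the KSA’s member string (`serviceAccount:PROJECT_ID.svc.id.goog[NAMESPACE/KSA_NAME]`, where PROJECT_ID is the cluster’s project), and an `iam.gke.io/gcp-service-account` annotation on the KSA. The helper below is a hypothetical sketch that only builds those two values; applying them is left to your usual tooling.

```javascript
// Hypothetical helper: the two values that link a KSA to a GSA.
function workloadIdentityLink({clusterProject, namespace, ksaName, gsaEmail}) {
  return {
    // IAM binding to add to the GSA's own policy, letting the KSA act as it.
    gsaBinding: {
      role: 'roles/iam.workloadIdentityUser',
      members: [`serviceAccount:${clusterProject}.svc.id.goog[${namespace}/${ksaName}]`],
    },
    // Annotation to add to the Kubernetes ServiceAccount object.
    ksaAnnotations: {'iam.gke.io/gcp-service-account': gsaEmail},
  };
}

const link = workloadIdentityLink({
  clusterProject: 'gke-project',
  namespace: 'payments',
  ksaName: 'api',
  gsaEmail: 'service-x@PROJECT_ID.iam.gserviceaccount.com',
});
console.log(link.gsaBinding.members[0]); // serviceAccount:gke-project.svc.id.goog[payments/api]
```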

Explicitly (Service Account Keys)

In cases where the process is not running in GCP, or runs somewhere other than the identity’s host project, project admins must create a Service Account Key. SA-Key creation can be disabled by applying an Organization Policy (Ref #5).

Note: SA-Keys should be treated as highly sensitive data; a leaked key is a very high security risk.

Using SA-Key in Code

To use it in application code, either point explicitly to the SA-Key file, or rely on ADC with the GOOGLE_APPLICATION_CREDENTIALS variable and let the Google SDKs pick it up.

Example:

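```javascript
const {Storage} = require('@google-cloud/storage');

// Point the SDK explicitly at the SA-Key file.
const options = {keyFilename: 'path/to/service_account.json'};
const storage = new Storage(options);

storage.getBuckets().then(([buckets]) => console.log(buckets.map((b) => b.name)));
```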
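Because a key file silently decides which identity the process assumes, it can help to check which service account and host project a key belongs to before wiring it in, without ever printing the private key. The helpers below are hypothetical; the field names they read (`type`, `client_email`, `project_id`, `private_key_id`) are those found in the JSON key files GCP generates, and `authorized_user` is the type of the file `gcloud auth application-default login` writes.

```javascript
const fs = require('node:fs');

// Hypothetical helper: report which identity a key carries, never the secret.
function describeKeyFile(json) {
  const key = typeof json === 'string' ? JSON.parse(json) : json;
  if (key.type !== 'service_account') {
    throw new Error(`Not a service account key (type: ${key.type})`);
  }
  return {
    serviceAccount: key.client_email,
    hostProject: key.project_id, // project that owns the service account
    keyId: key.private_key_id,   // distinguishes this key from the SA's other keys
  };
}

// Hypothetical convenience wrapper for a key file on disk.
function describeKeyFileAt(filePath) {
  return describeKeyFile(fs.readFileSync(filePath, 'utf8'));
}
```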

Tracking SA-Key Usage

The article “Service Account Key Usage Visibility” by Chris Leibl (https://bit.ly/2Ndjy2M) describes how to generate reports on SA-Key usage, which give great insight into which keys were used to access managed resources. However, if an SA and its keys access resources in projects that are not managed by the same organization, those logs show up in the target projects, and there is no trace of that usage in the reports described there.

Takeaway

Using Application Default Credentials (ADC) is the most effective way to give GCP identities to an application. A final caution: DO NOT commit SA-Keys to code repositories or leave them unprotected. Anyone holding an SA-Key can use it to make GCP calls, and there is no easy way to detect or stop that.
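One cheap safeguard against committing keys is a check, for example in a pre-commit hook, that refuses files that look like SA-Key JSON. The sketch below is hypothetical and heuristic: it flags text that contains both a `"type": "service_account"` field and a `"private_key"` field, so it will miss keys that are encoded or split across files. Dedicated secret scanners do this more thoroughly.

```javascript
// Heuristic: GCP JSON key files contain both of these fields.
const TYPE_FIELD = /"type"\s*:\s*"service_account"/;
const PRIVATE_KEY_FIELD = /"private_key"\s*:/; // does not match "private_key_id"

function looksLikeSaKey(text) {
  return TYPE_FIELD.test(text) && PRIVATE_KEY_FIELD.test(text);
}

// Hypothetical hook body: given {filePath: contents}, return the paths
// that must not be committed.
function findSaKeys(files) {
  return Object.entries(files)
    .filter(([, text]) => looksLikeSaKey(text))
    .map(([filePath]) => filePath);
}
```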

The final suggestion is to grant least privilege when setting IAM bindings. For example, when an identity needs access to a single GCS bucket, grant the permission on that bucket rather than at project level, which would grant access to every bucket in the project.
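Granting at bucket level means editing the bucket’s own IAM policy rather than the project’s. The pure function below is a hypothetical sketch of the merge step: it adds a member to a role’s binding without dropping or duplicating existing entries, and it leaves conditional bindings alone. With `@google-cloud/storage`, its input would be the policy returned by `bucket.iam.getPolicy()` and its output would be passed to `bucket.iam.setPolicy()`; the network calls are left out so the sketch runs offline.

```javascript
// Return a copy of `policy` with `member` granted `role`, leaving other
// bindings (and fields such as etag) untouched and avoiding duplicates.
function grant(policy, role, member) {
  const bindings = (policy.bindings || []).map((b) => ({...b, members: [...b.members]}));
  const existing = bindings.find((b) => b.role === role && !b.condition);
  if (existing) {
    if (!existing.members.includes(member)) existing.members.push(member);
  } else {
    bindings.push({role, members: [member]});
  }
  return {...policy, bindings};
}

// Least privilege: read access to this one bucket's objects only.
const before = {bindings: [{role: 'roles/storage.legacyBucketOwner', members: ['projectOwner:PROJECT_ID']}]};
const after = grant(
  before,
  'roles/storage.objectViewer',
  'serviceAccount:service-x@PROJECT_ID.iam.gserviceaccount.com',
);
console.log(after.bindings.length); // 2
```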

References

1. https://cloud.google.com/iam/docs

2. https://cloud.google.com/storage/docs/access-control/lists#scopes

3. https://cloud.google.com/kubernetes-engine/docs/how-to/workload-identity

4. https://cloud.google.com/docs/authentication/production

5. https://cloud.google.com/resource-manager/docs/organization-policy/overview

6. https://cloud.google.com/compute/docs/storing-retrieving-metadata